In our current experiment, around 80 people made predictions on the Euro 2016 football competition. Participants range from 8 to 80 years old, from people who have never watched a match to a BBC football journalist, from Europeans to Americans. Each participant made 36 predictions (one for each of the group matches) in the month before the competition began and is being scored as the results unfold. They told us two factors for each match:

- Who they thought would win

- How confident they were in that answer (ranging from a flip-of-a-coin 50% to a dead-cert 100%).

But how are we scoring them? Do they only get points if they win? Do we treat experts differently from those less experienced? How is their confidence accounted for? What about draws?

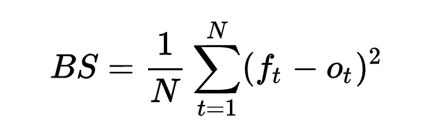

The Brier score

This is where a scoring method called the ‘Brier score’ comes into play.

The method, proposed by Glenn W. Brier in the 1950s, lets us measure the accuracy of a person’s predictions and gives them a score between 0 and 1, where lower is better. A score of 0 indicates that a participant declared 100% certainty for every event and got them all correct; a score of 1 reflects a participant who declared 100% certainty for every event but was wrong in all their predictions.

Here’s an example:

For the first three games, I made the following predictions:

- France will beat Romania (60% confidence)

- Switzerland will beat Albania (52% confidence)

- Slovakia will beat Wales (55% confidence)

I was correct in the first two predictions, but Wales beat Slovakia. That gave me a Brier score of 0.23. How was this calculated?

How to calculate a Brier score

Firstly, take the confidence (as a decimal, so 60% becomes 0.6) and subtract 1 if the prediction was correct, or subtract 0 if the prediction was wrong.

- France will beat Romania @ 60% confidence = 0.60 – 1 = -0.4

- Switzerland will beat Albania @ 52% confidence = 0.52 – 1 = -0.48

- Slovakia will beat Wales @ 55% confidence = 0.55 – 0 = 0.55

Then square each of those differences:

- France will beat Romania @ 60% confidence = -0.4 x -0.4 = 0.16

- Switzerland will beat Albania @ 52% confidence = -0.48 x -0.48 = 0.2304

- Slovakia will beat Wales @ 55% confidence = 0.55 x 0.55 = 0.3025

Then add up the squared numbers:

= 0.16 + 0.2304 + 0.3025 = 0.6929

Then multiply that sum by (1 / sample size):

= 0.6929 x (1/3)

= 0.6929 ÷ 3 = 0.23097 (to five decimal places)

Therefore, my Brier score was 0.23
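The four steps above can be sketched in a few lines of Python. This is a minimal illustration rather than the code we actually ran; the function name `brier_score` and the `(confidence, was_correct)` pair layout are assumptions made for this example.

```python
def brier_score(predictions):
    """Return the Brier score for a list of (confidence, was_correct) pairs.

    confidence is a decimal between 0.5 and 1.0; was_correct is True or False.
    The pair layout is an assumption made for this sketch.
    """
    if not predictions:
        raise ValueError("need at least one prediction")
    total = 0.0
    for confidence, was_correct in predictions:
        outcome = 1.0 if was_correct else 0.0    # subtract 1 if correct, 0 if wrong
        total += (confidence - outcome) ** 2     # square the difference and add it up
    return total / len(predictions)              # multiply by 1 / sample size


my_predictions = [
    (0.60, True),   # France to beat Romania: correct
    (0.52, True),   # Switzerland to beat Albania: correct
    (0.55, False),  # Slovakia to beat Wales: wrong
]

print(round(brier_score(my_predictions), 5))  # 0.23097
```

Storing each prediction as a confidence plus a right-or-wrong flag mirrors the hand calculation, so every line of the loop maps onto one of the steps above.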

So confidence really matters?

Sure does.

- If you sit on the fence and have 50% confidence in every prediction, then you will have a Brier score of 0.25 regardless of who wins

- If you are correct in every prediction, your Brier score will be somewhere between 0 and 0.25

- If every one of your predictions is wrong, your Brier score will be somewhere between 0.25 and 1
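Those three boundaries are easy to check numerically. The sketch below redefines a compact, hypothetical `brier_score` helper (same calculation as described earlier) and tries the extreme cases; the three-match lists are made-up inputs, not real participant data.

```python
def brier_score(predictions):
    # predictions: list of (confidence, was_correct) pairs (layout assumed for this sketch)
    return sum((c - (1.0 if ok else 0.0)) ** 2 for c, ok in predictions) / len(predictions)


# Sitting on the fence: 50% confidence scores 0.25 whether you are right or wrong.
fence_right = brier_score([(0.5, True)] * 3)
fence_wrong = brier_score([(0.5, False)] * 3)

# Always correct: the more confident you are, the closer the score gets to 0.
all_correct_certain = brier_score([(1.0, True)] * 3)

# Always wrong: the more confident you are, the closer the score gets to 1.
all_wrong_certain = brier_score([(1.0, False)] * 3)

print(fence_right, fence_wrong)   # 0.25 0.25
print(all_correct_certain)        # 0.0
print(all_wrong_certain)          # 1.0
```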

Of course, most people will make a mixture of correct and incorrect predictions, with confidence ratings between 50% and 100%, which is what makes it fun.

So why are all the publicised scores out of 100, not 0 to 1?

I decided that, although many of the participants wanted to know how they were doing against others, they didn’t care enough to read a post on how to calculate Brier scores. So I took the Brier score, subtracted it from 1 so that higher is better, multiplied the result by 100 and gave people a score out of 100, because it’s a more familiar scoring system.

So my Brier score of 0.23097 became 76.90 out of 100.
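As a rough sketch, the conversion looks like this in Python. The function name `score_out_of_100` is made up for this example, and reading "inverted" as "subtracted from 1" is inferred from the numbers above (0.23097 becoming 76.90).

```python
def score_out_of_100(brier):
    """Turn a 0-to-1 Brier score (lower is better) into a 0-to-100 score (higher is better)."""
    if not 0.0 <= brier <= 1.0:
        raise ValueError("Brier score must be between 0 and 1")
    return (1.0 - brier) * 100.0


print(f"{score_out_of_100(0.23097):.2f}")  # 76.90
```

On this scale a fence-sitter with a Brier score of 0.25 would get 75 out of 100.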

What about draws?

We ignore draws. It makes our calculation easier, and it made participation easier by keeping the question binary: team A will win or team B will win. In terms of the calculations, we pretend that the match didn’t take place and that no prediction was made. So my score didn’t change after the England vs Russia draw.
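One way to sketch that rule in Python is to drop drawn matches before scoring. Everything here is an assumption for illustration: the function name, the use of `None` to mark a draw, and the 0.70 confidence attached to the drawn match (the real figure doesn't matter, since the prediction is discarded).

```python
def brier_score_ignoring_draws(predictions):
    """Brier score over (confidence, result) pairs.

    result is True (prediction correct), False (prediction wrong) or None (draw).
    Draws are removed first, as if the match never took place.
    """
    decided = [(c, r) for c, r in predictions if r is not None]
    if not decided:
        return None  # nothing to score yet
    return sum((c - (1.0 if r else 0.0)) ** 2 for c, r in decided) / len(decided)


first_three = [(0.60, True), (0.52, True), (0.55, False)]
with_a_draw = first_three + [(0.70, None)]  # illustrative confidence for a drawn match

before = brier_score_ignoring_draws(first_three)
after = brier_score_ignoring_draws(with_a_draw)
print(before == after)  # True
```

Because the drawn match is filtered out before the sum and the sample size are calculated, it changes neither, which is why the score stays the same.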

Please note that, for historical accuracy, we need to acknowledge that Brier originally used a score between 0 and 2, but many people (us included) now use the 0 to 1 range.