If I mentioned a ‘Sprint Report’ to most teams, I’d expect a response along the lines of:

A report on what we did in the Sprint? That sounds a bit over the top, doesn’t it?! You’ll be suggesting I do a Gantt chart next!

Although I’d prefer to give users something tangible to get their hands on (i.e. a working product or working software) over such documentation, I have used Sprint Reports in the past. And although I haven’t used one for about three years, I accept that they can be useful in some circumstances.

“What is a Sprint Report?”

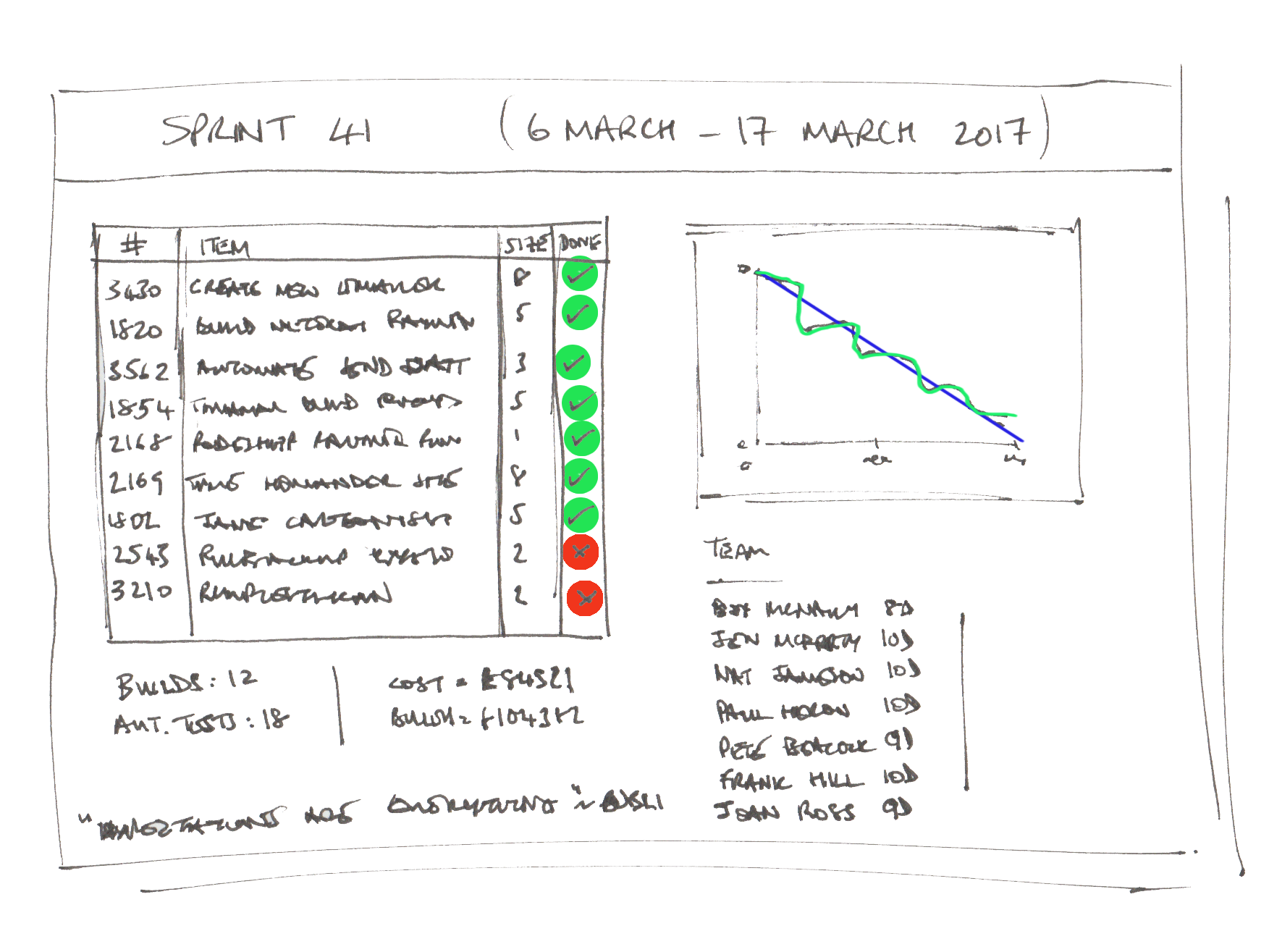

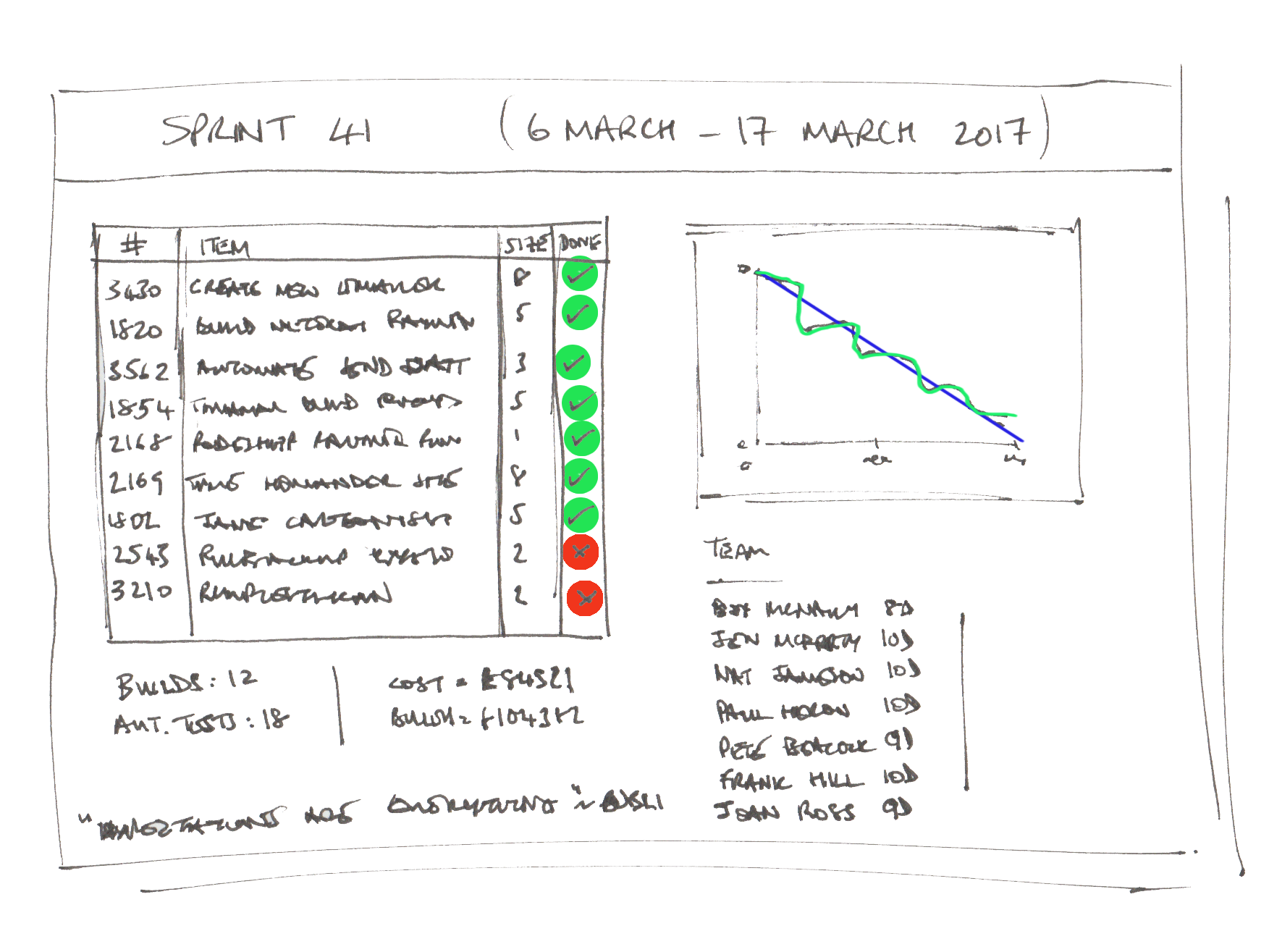

A Sprint Report is a summary of what happened in a Sprint. For example, it might contain:

- The date the Sprint started and the date it ended

- Who was in the team (including how many days each person worked)

- Which stories were in the Sprint Backlog (including their estimate and whether they were completed by the end of the Sprint)

- Metrics (e.g. the team’s current velocity, how many builds ran during the Sprint, how many automated tests were written, how much the team has spent and how much funding remains, etc.)

“Who is a Sprint Report for?”

The team. Sometimes we want to look back at what we’ve done. What if a new member joins the team? What about calculating the team’s velocity?

Other interested parties. There are often lots of people who are interested in what you’re doing but can’t make a Sprint Review (they may be in another country or time zone, they might have been on the road when you presented your work, or they might have been on holiday). Don’t they deserve to know what you’ve done recently?

Your clients. Even an engaged client who attends the Sprint Reviews sometimes needs to feed your progress back to their organisation. Imagine, for example, a Product Owner who needs to tell their finance department what that £1m is being spent on. Such a report would help them. And what about an agency that needs to invoice the client for its work?

“This sounds like hard work”

There are many objections you could raise to what I’ve written above, but the main defence is that this report should only take minutes to produce. I’d suggest it should take between 10 and 15 minutes (for a 2-week Sprint). Whatever story-tracking system you use probably has a simple way to report most of this information.

In fact, if you haven’t got an easy way to produce such a report, then maybe that’s even more reason to record this information. Scribbling down this summary at the end of the Sprint might save you a lot of hassle later on.
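As a rough illustration of how little effort this can take, here is a minimal Python sketch that turns a list of stories into a plain-text summary. It assumes your story-tracking system can export each story’s title, estimate (in story points) and completion status; the `Story` class, the `sprint_report` function and the sample stories are all hypothetical, not part of any particular tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Story:
    # Hypothetical fields; map these to whatever your tracker exports.
    title: str
    estimate: int  # story points
    completed: bool


def sprint_report(start: date, end: date, stories: list[Story]) -> str:
    """Build a plain-text Sprint Report summary from a list of stories."""
    committed = sum(s.estimate for s in stories)
    # Only completed stories count towards the points delivered this Sprint,
    # which is the figure most teams feed into their velocity.
    delivered = sum(s.estimate for s in stories if s.completed)
    lines = [
        f"Sprint: {start.isoformat()} to {end.isoformat()}",
        f"Points committed: {committed}",
        f"Points completed: {delivered}",
        "Stories:",
    ]
    for s in stories:
        status = "done" if s.completed else "not done"
        lines.append(f"  - {s.title} ({s.estimate} pts, {status})")
    return "\n".join(lines)


if __name__ == "__main__":
    # Made-up example data, for illustration only.
    backlog = [
        Story("Login page", 5, True),
        Story("Password reset", 3, True),
        Story("Audit log", 8, False),
    ]
    print(sprint_report(date(2024, 1, 8), date(2024, 1, 19), backlog))
```

Team members, days worked and other metrics could be added as extra fields in the same way; the point is that once the data exists, the report itself is a few lines of formatting.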

“I don’t want to use these! You can’t make me!”

I have no intention of forcing anyone to use these. I have no intention of even recommending that you use them. I rarely use them myself. But if your team would benefit from them, at least you now know they exist.

“Is there anything you wouldn’t include in the report?”

I saw a blog post by Mike Cohn recently where he discussed whether teams should include actions that come out of their Retrospectives. He rightly states that you should be careful about doing this, and advises you to get the team to approve their inclusion. I’d go further and say you should not include this information at all. If the team has had any major learnings, if major problems or blockers have appeared (or remain), or if the team made important decisions during the Sprint, then they may wish to add these. But I’d avoid adding Retro actions as a matter of course, as doing so will probably stunt the team’s evolution.